📊 Implications

1. The Shift to Deterministic Output

Problem: "Chat" interfaces encourage vague queries, which produce non-actionable, "vibes"-based responses.

Solution: Treat the LLM as a Junior Analyst. Do not ask for opinions; assign specific deliverables with defined schemas.
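One way to make "defined schemas" concrete is to request JSON and reject any reply that does not parse into the expected rows. This is a minimal sketch that assumes the deliverable is a JSON list of objects. The field names (`original`, `critique`, `proposed_rewrite`, `metric`) and the `parse_deliverable` helper are hypothetical and chosen only for illustration. Only the standard-library `json` module is used.

```python
import json

# Hypothetical deliverable schema: every row must carry these four fields.
REQUIRED_FIELDS = ("original", "critique", "proposed_rewrite", "metric")


def parse_deliverable(raw: str) -> list[dict[str, str]]:
    """Parse a model reply as a JSON list of rows; raise ValueError if off-schema."""
    # Free-text "vibes" replies fail here (json.JSONDecodeError is a ValueError).
    data = json.loads(raw)
    if not isinstance(data, list):
        raise ValueError("expected a JSON list of rows")
    rows = []
    for i, row in enumerate(data):
        if not isinstance(row, dict):
            raise ValueError(f"row {i} is not a JSON object")
        # A field counts as missing if it is absent, null, or blank.
        missing = [
            name for name in REQUIRED_FIELDS
            if row.get(name) is None or not str(row[name]).strip()
        ]
        if missing:
            raise ValueError(f"row {i} is missing {missing}")
        # Normalise every value to a string so downstream checks are uniform.
        rows.append({name: str(row[name]) for name in REQUIRED_FIELDS})
    return rows
```

An opinion-style reply such as "Your portfolio looks great!" raises immediately. That rejection is the behavioral difference between asking for an opinion and assigning a deliverable.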

2. The Handshake Protocol

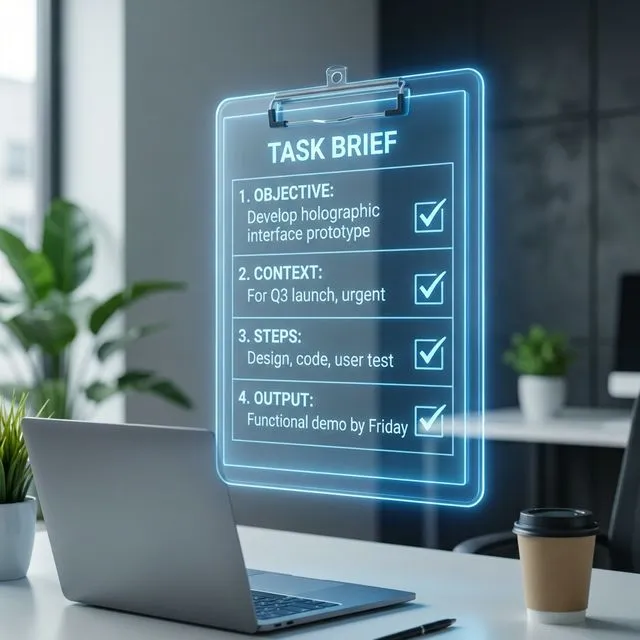

Every task assignment must satisfy the 4-part Handshake (Objective, Context, Output Schema, Quality Bar) before execution begins.
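A pre-flight check makes the "before execution begins" rule enforceable rather than aspirational. The sketch below assumes a brief is held as a plain dict keyed by the four Handshake parts named in this document's FAQ. The key spellings and both helper functions are hypothetical.

```python
# The four Handshake parts; key spellings are a hypothetical convention.
HANDSHAKE_PARTS = ("objective", "context", "output_schema", "quality_bar")


def missing_handshake_parts(brief: dict) -> list[str]:
    """Return the Handshake parts that are absent or blank in a task brief."""
    missing = []
    for part in HANDSHAKE_PARTS:
        value = brief.get(part)
        # None, empty containers, and whitespace-only strings all count as unsatisfied.
        if not value or (isinstance(value, str) and not value.strip()):
            missing.append(part)
    return missing


def assert_handshake(brief: dict) -> None:
    """Refuse to start execution until every Handshake part is filled in."""
    missing = missing_handshake_parts(brief)
    if missing:
        raise ValueError(f"Handshake incomplete, missing: {', '.join(missing)}")
```

Calling `assert_handshake(brief)` before sending anything to the model turns an incomplete brief into an immediate error instead of a wasted generation.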

3. The Task Template (JSON-S)

Use this schema for all complex requests. It forces you to define constraints before the model starts work.
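Because the schema is fixed, a brief can be stored as structured data and rendered to prompt text for each new task. This makes template reuse a matter of changing field values. The following is a minimal sketch that assumes a hypothetical `TaskBrief` dataclass whose fields mirror the five STD-TASK-BRIEF sections. The rendering layout is an illustrative choice, not a required format.

```python
from dataclasses import dataclass


@dataclass
class TaskBrief:
    """Hypothetical container mirroring the five STD-TASK-BRIEF sections."""
    objective: list[str]
    context: dict[str, str]
    steps: list[str]
    output_format: list[str]  # column headers of the required output table
    quality_bar: list[str]

    def render(self) -> str:
        """Render the brief as plain prompt text in the template's section order."""
        lines = ["1. OBJECTIVE:"]
        lines += [f"- [ ] {item}" for item in self.objective]
        lines.append("2. CONTEXT:")
        lines += [f"- {key}: {value}" for key, value in self.context.items()]
        lines.append("3. STEPS:")
        lines += [f"- {step}" for step in self.steps]
        lines.append("4. OUTPUT_FORMAT:")
        lines.append("| " + " | ".join(self.output_format) + " |")
        lines.append("5. QUALITY_BAR:")
        lines += [f"- {rule}" for rule in self.quality_bar]
        return "\n".join(lines)


# Example brief reproducing the template's portfolio-review task.
brief = TaskBrief(
    objective=["Review Portfolio Copy", "Identify 3 weakest claims"],
    context={"Target Audience": "Tech Recruiters", "Tone": "Confident, terse, quantitative"},
    steps=["READ input file", "EXTRACT claims", 'CRITIQUE against "So What?" test', "REWRITE"],
    output_format=["Original", "Critique", "Proposed Rewrite", "Metric"],
    quality_bar=['No buzzwords ("passionate", "innovative")', "Every rewrite must contain a number."],
)
prompt = brief.render()
```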

📄 TEMPLATE: STD-TASK-BRIEF

1. OBJECTIVE:

- [ ] Review Portfolio Copy

- [ ] Identify 3 weakest claims

2. CONTEXT:

- Target Audience: Tech Recruiters

- Tone: Confident, terse, quantitative

3. STEPS:

- READ input file

- EXTRACT claims

- CRITIQUE against "So What?" test

- REWRITE

4. OUTPUT_FORMAT:

| Original | Critique | Proposed Rewrite | Metric |
|---|---|---|---|

5. QUALITY_BAR:

- No buzzwords ("passionate", "innovative")

- Every rewrite must contain a number.
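The QUALITY_BAR rules are mechanical enough to check automatically once the reply comes back in the OUTPUT_FORMAT table. The sketch below makes several assumptions:

- The model returns a pipe-delimited Markdown table with exactly those four headers.
- The buzzword check applies only to the Proposed Rewrite column.
- "Contains a number" means "contains at least one digit."

`parse_table`, `quality_bar_violations`, and the sample reply are hypothetical and exist only for illustration.

```python
import re

# Buzzword list taken from the QUALITY_BAR example; extend it per task.
BUZZWORDS = ("passionate", "innovative")


def parse_table(markdown: str) -> list[dict[str, str]]:
    """Parse a pipe-delimited table (header row first) into a list of row dicts."""
    lines = [ln.strip() for ln in markdown.splitlines() if ln.strip().startswith("|")]
    if not lines:
        return []
    header = [cell.strip() for cell in lines[0].strip("|").split("|")]
    rows = []
    for ln in lines[1:]:
        cells = [cell.strip() for cell in ln.strip("|").split("|")]
        # Skip the |---|---| separator row that Markdown tables use.
        if all(set(cell) <= set("-: ") for cell in cells):
            continue
        rows.append(dict(zip(header, cells)))
    return rows


def quality_bar_violations(row: dict[str, str]) -> list[str]:
    """Check one row's Proposed Rewrite against the two QUALITY_BAR rules."""
    rewrite = row.get("Proposed Rewrite", "")
    problems = []
    for word in BUZZWORDS:
        # Whole-word, case-insensitive match.
        if re.search(rf"\b{word}\b", rewrite, re.IGNORECASE):
            problems.append(f"buzzword: {word}")
    if not re.search(r"\d", rewrite):
        problems.append("rewrite contains no number")
    return problems


# Invented sample reply, for illustration only.
reply = """
| Original | Critique | Proposed Rewrite | Metric |
|---|---|---|---|
| Passionate about scalable systems | Buzzword, no evidence | Cut p95 latency 40% across 12 services | 40% |
| Innovative team player | Vague | Led an innovative redesign | n/a |
"""

for row in parse_table(reply):
    print(row["Proposed Rewrite"], "->", quality_bar_violations(row) or "OK")
```

Spelled-out numbers ("three") fail the digit check as written, so widen the pattern if the brief allows them.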

4. Efficiency Metrics

Adopting this protocol resulted in:

- Prompting Time: Reduced from 8 min to 3 min (template reuse).

- Re-roll Rate: Reduced by 60% (clearer initial constraints).
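Expressed on the same percentage scale as the re-roll figure, the prompting-time change works out as follows:

```latex
\frac{8\ \text{min} - 3\ \text{min}}{8\ \text{min}} = \frac{5}{8} = 62.5\%
```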

Frequently Asked Questions

What is the AI Delegation Framework?

It's a structured protocol for assigning deterministic tasks to LLMs. Instead of vague conversational queries, you provide a 4-part "Handshake" — Objective, Context, Output Schema, and Quality Bar — that forces the AI to produce actionable work product instead of generic advice.

What is the difference between "Chat" and "Work Product"?

"Chat" is open-ended conversation that produces opinions. "Work Product" is constrained output with defined deliverables. The shift happens when you specify an Output Schema (JSON, table, checklist) and a Quality Bar (e.g., "no buzzwords," "every rewrite must contain a number").

How does the Task Template reduce re-rolls?

By defining constraints up front (tone, audience, format, and rejection criteria), you remove the ambiguity that causes weak first outputs. In measured use, this cut the re-roll rate by 60% and reduced prompting time from 8 to 3 minutes.